Pandas/Python memory spike while reading 3.2 GB file

An answer to this question on Stack Overflow.

Question

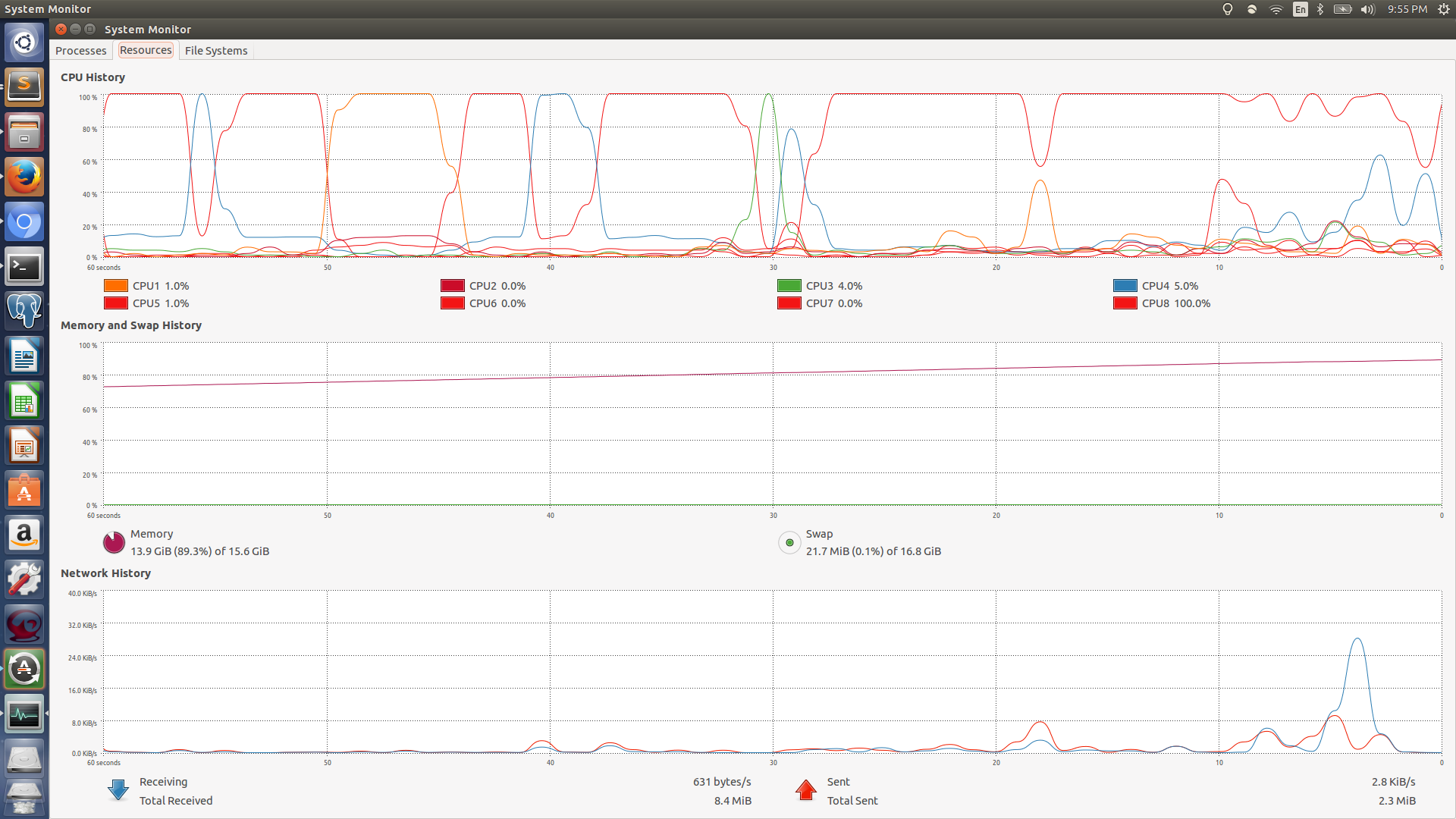

I have been trying to read a 3.2 GB file into memory using pandas' read_csv function, but I kept running into some sort of memory leak: my memory usage would spike to 90%+.

So, as alternatives:

I tried defining dtype to avoid keeping the data in memory as strings, but saw similar behaviour.

Tried using numpy to read the csv, thinking I would get some different results, but was definitely wrong about that.

Tried reading line by line and ran into the same problem, only really slowly.

I recently moved to python 3, so thought there could be some bug there, but saw similar results on python2 + pandas.

The file in question is the train.csv file from the Grupo Bimbo Kaggle competition.

System info:

RAM: 16GB, Processor: i7 8cores

Let me know if you would like to know anything else.

Thanks :)

EDIT 1: it's a memory spike, not a leak (sorry, my bad).

EDIT 2: Sample of the csv file

Semana,Agencia_ID,Canal_ID,Ruta_SAK,Cliente_ID,Producto_ID,Venta_uni_hoy,Venta_hoy,Dev_uni_proxima,Dev_proxima,Demanda_uni_equil

3,1110,7,3301,15766,1212,3,25.14,0,0.0,3

3,1110,7,3301,15766,1216,4,33.52,0,0.0,4

3,1110,7,3301,15766,1238,4,39.32,0,0.0,4

3,1110,7,3301,15766,1240,4,33.52,0,0.0,4

3,1110,7,3301,15766,1242,3,22.92,0,0.0,3

EDIT 3: number of rows in the file: 74180465

Other than a simple pd.read_csv('filename', low_memory=False)

I have tried

from numpy import genfromtxt

my_data = genfromtxt('data/train.csv', delimiter=',')

UPDATE: The code below just worked, but I still want to get to the bottom of this problem; there must be something wrong.

import pandas as pd

import gc

data = pd.DataFrame()

data_iterator = pd.read_csv('data/train.csv', chunksize=100000)

for sub_data in data_iterator:

    data.append(sub_data)

gc.collect()
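A note on the loop above: `DataFrame.append` returned a new frame rather than modifying `data` in place (and it was removed in pandas 2.0), so as written each chunk is discarded, which may be why memory stayed low. A minimal sketch of one way to keep the chunks is below. The helper name `read_in_chunks` is hypothetical, and the inline sample (copied from EDIT 2) stands in for `data/train.csv`. The final `pd.concat` still needs room for the whole frame, so this does not by itself avoid the memory cost.

```python
import io

import pandas as pd

# Sample rows copied from the question; a stand-in for data/train.csv.
SAMPLE_CSV = """Semana,Agencia_ID,Canal_ID,Ruta_SAK,Cliente_ID,Producto_ID,Venta_uni_hoy,Venta_hoy,Dev_uni_proxima,Dev_proxima,Demanda_uni_equil
3,1110,7,3301,15766,1212,3,25.14,0,0.0,3
3,1110,7,3301,15766,1216,4,33.52,0,0.0,4
3,1110,7,3301,15766,1238,4,39.32,0,0.0,4
3,1110,7,3301,15766,1240,4,33.52,0,0.0,4
3,1110,7,3301,15766,1242,3,22.92,0,0.0,3
"""


def read_in_chunks(source, chunksize):
    """Read a CSV chunk by chunk and combine the chunks into one DataFrame.

    Hypothetical helper for illustration; `source` is a path or file-like object.
    """
    chunks = []
    for sub_data in pd.read_csv(source, chunksize=chunksize):
        # list.append mutates the list in place, so every chunk is kept.
        chunks.append(sub_data)
    # One concatenation at the end instead of growing a DataFrame per chunk.
    return pd.concat(chunks, ignore_index=True)


data = read_in_chunks(io.StringIO(SAMPLE_CSV), chunksize=2)
print(data.shape)
```

With the real file, the call would look like `read_in_chunks('data/train.csv', chunksize=100000)`.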

EDIT: Piece of code that worked. Thanks for all the help, guys. I had messed up my dtypes by adding Python dtypes instead of numpy ones. Once I fixed that, the code below worked like a charm.

dtypes = {'Semana': pd.np.int8,

'Agencia_ID':pd.np.int8,

'Canal_ID':pd.np.int8,

'Ruta_SAK':pd.np.int8,

'Cliente_ID':pd.np.int8,

'Producto_ID':pd.np.int8,

'Venta_uni_hoy':pd.np.int8,

'Venta_hoy':pd.np.float16,

'Dev_uni_proxima':pd.np.int8,

'Dev_proxima':pd.np.float16,

'Demanda_uni_equil':pd.np.int8}

data = pd.read_csv('data/train.csv', dtype=dtypes)

This brought the memory consumption down to just under 4 GB.
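One caveat worth checking on the dtypes above: `int8` only holds values from -128 to 127, while the sample rows in EDIT 2 already contain values such as 1110 and 15766. The sketch below checks the first sample row against NumPy's integer ranges using `np.iinfo`. The helper `smallest_int_dtype` is hypothetical, and the true value ranges of the full file are not known from the question, so treat the result as a lower bound on the width each column needs. Also note that `pd.np` was removed in pandas 2.0; importing `numpy` directly is the usual replacement.

```python
import numpy as np

# Integer columns of the first sample row from EDIT 2 in the question.
# The full file may contain larger values; this is only the visible sample.
sample_row = {
    "Semana": 3,
    "Agencia_ID": 1110,
    "Canal_ID": 7,
    "Ruta_SAK": 3301,
    "Cliente_ID": 15766,
    "Producto_ID": 1212,
    "Venta_uni_hoy": 3,
    "Dev_uni_proxima": 0,
    "Demanda_uni_equil": 3,
}


def smallest_int_dtype(value):
    """Return the narrowest signed NumPy integer type that can hold `value`."""
    for candidate in (np.int8, np.int16, np.int32, np.int64):
        info = np.iinfo(candidate)
        if info.min <= value <= info.max:
            return candidate
    raise OverflowError(f"{value} does not fit in a 64-bit signed integer")


# Columns whose sample value already falls outside the int8 range.
too_small_for_int8 = [
    name for name, value in sample_row.items()
    if smallest_int_dtype(value) is not np.int8
]
print(too_small_for_int8)
```

Running the same check over per-column minima and maxima of the real data (for example, computed chunk by chunk) would give a safer dtype choice than the sample alone.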

Answer

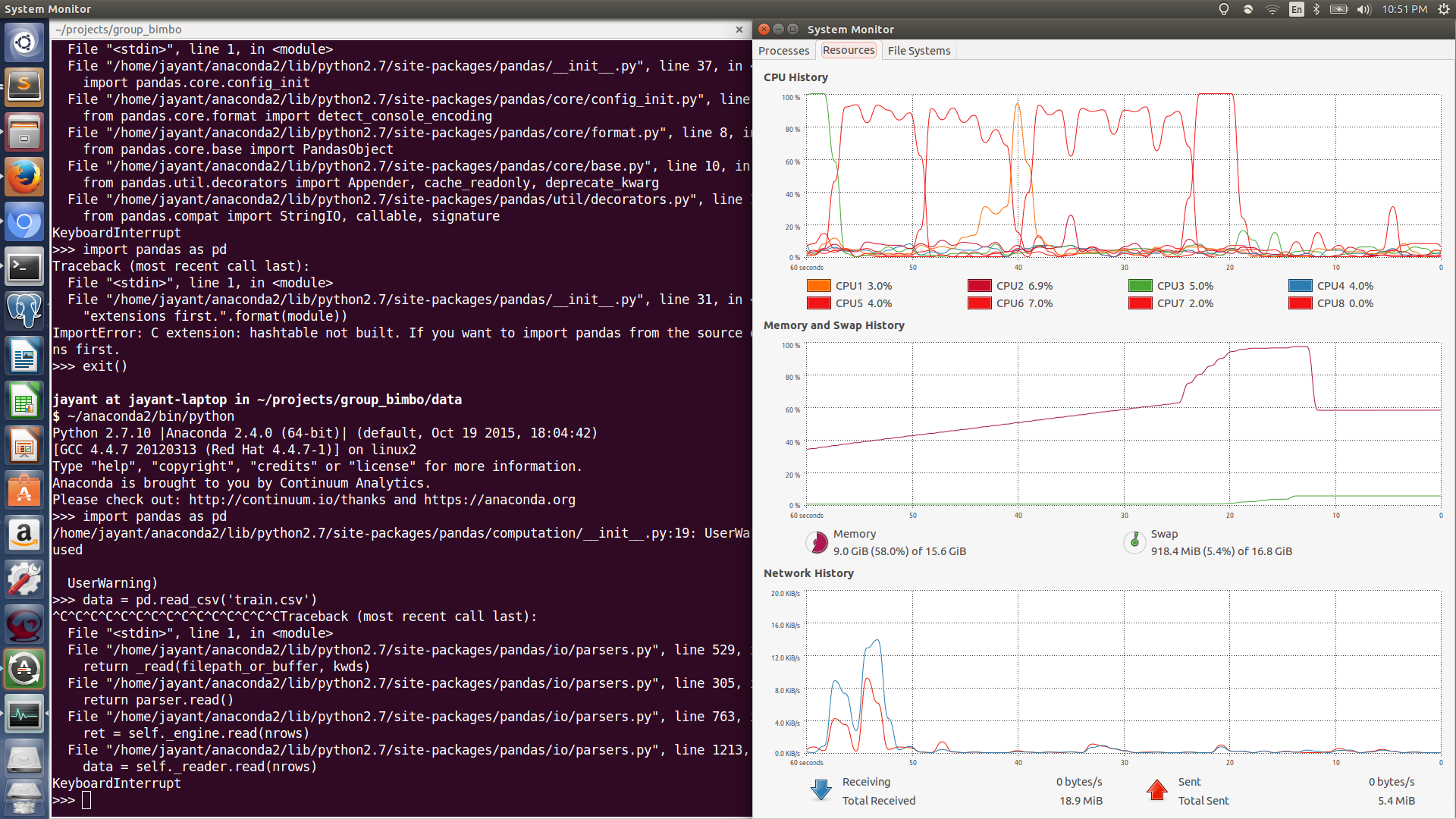

Based on your second chart, it looks as though there's a brief period where your machine allocates an additional 4.368 GB of memory, which is approximately the size of your 3.2 GB dataset (assuming 1 GB of overhead, which might be a stretch).
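For scale, here is a rough back-of-envelope estimate of what the parsed columns alone would occupy, using the row count from the question (74180465) and its 11 columns. It assumes pandas' usual defaults of `int64` and `float64` (8 bytes each) when no `dtype` is given, and it ignores the index, parser buffers, and any temporary copies, so it is a sketch of the order of magnitude rather than a measurement.

```python
# Figures taken from the question: row count (EDIT 3) and column layout (EDIT 2).
N_ROWS = 74_180_465
N_INT_COLUMNS = 9     # Semana ... Demanda_uni_equil, excluding the two floats
N_FLOAT_COLUMNS = 2   # Venta_hoy, Dev_proxima

# Assumption: default dtypes are int64 and float64, 8 bytes per value.
BYTES_INT64 = 8
BYTES_FLOAT64 = 8

bytes_per_row = N_INT_COLUMNS * BYTES_INT64 + N_FLOAT_COLUMNS * BYTES_FLOAT64
default_bytes = N_ROWS * bytes_per_row

print(f"{bytes_per_row} bytes per row")
print(f"{default_bytes / 1e9:.2f} GB for the column data at default dtypes")
```

Under these assumptions the column data is larger than the 3.2 GB text file, so a single extra copy of part of it during parsing or construction could plausibly produce a spike of a few gigabytes.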

I tried to track down a place where this could happen and haven't been super successful. Perhaps you can find it, though, if you're motivated. Here's the path I took:

This line reads:

def read(self, nrows=None):

    if nrows is not None:

        if self.options.get('skip_footer'):

            raise ValueError('skip_footer not supported for iteration')

    ret = self._engine.read(nrows)

Here, _engine references PythonParser.

That, in turn, calls _get_lines().

That makes calls to a data source.

Which looks like it reads the data in as strings from something relatively standard (see here), like TextIOWrapper.

So things are getting read in as standard text and then converted; this explains the slow ramp.

What about the spike? I think that's explained by these lines:

ret = self._engine.read(nrows)

if self.options.get('as_recarray'):

    return ret

# May alter columns / col_dict

index, columns, col_dict = self._create_index(ret)

df = DataFrame(col_dict, columns=columns, index=index)

ret becomes all the components of a data frame.

self._create_index() breaks ret apart into these components:

def _create_index(self, ret):

    index, columns, col_dict = ret

    return index, columns, col_dict

So far, everything can be done by reference, and the call to DataFrame() continues that trend (see here).

So, if my theory is correct, DataFrame() is either copying the data somewhere, or _engine.read() is doing so somewhere along the path I've identified.
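One way to probe the first half of that theory is to measure what `DataFrame()` allocates when handed a dict of already-parsed arrays. The sketch below uses the standard library's `tracemalloc` together with `np.shares_memory`. The function name, the synthetic columns `a` and `b`, and the row count are all hypothetical stand-ins for the parser's `col_dict`, and whether a copy happens depends on the pandas version and its copy settings, so the numbers should be read for your own installation rather than taken as general.

```python
import tracemalloc

import numpy as np
import pandas as pd


def measure_dataframe_construction(n_rows=1_000_000):
    """Measure memory allocated while building a DataFrame from a dict of arrays.

    Hypothetical probe: the synthetic arrays stand in for the parser's col_dict.
    Returns (bytes still allocated, peak bytes, whether column 'a' shares memory
    with the input array).
    """
    col_dict = {
        "a": np.arange(n_rows, dtype=np.int64),
        "b": np.arange(n_rows, dtype=np.int64),
    }

    # Start tracing only after the inputs exist, so they are not counted.
    tracemalloc.start()
    df = pd.DataFrame(col_dict, columns=["a", "b"])
    current, peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()

    # True means the frame still points at the original buffer (no copy).
    shares = np.shares_memory(df["a"].to_numpy(), col_dict["a"])
    return current, peak, shares


current, peak, shares = measure_dataframe_construction()
print(f"allocated during construction: {current / 1e6:.1f} MB (peak {peak / 1e6:.1f} MB)")
print(f"column shares memory with input array: {shares}")
```

If the allocated figure is close to the size of the input arrays and `shares` is false, the constructor copied the data; if it is near zero, the copy (if any) is more likely happening inside `_engine.read()`.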