Suitability of parallel computation for comparisons over a large dataset

An answer to this question on Stack Overflow.

Question

Suppose the following hypothetical task:

I am given a single integer A (say, 32-bit) and a large array of integers, the B's (same type). The size of the integer array is fixed at runtime (it doesn't grow mid-run) and is arbitrary, except that it always fits inside either RAM or VRAM (whichever is smaller). For the sake of this scenario, the integer array can sit in either RAM or VRAM; ignore any time cost in transferring this initial data set at start-up.

The task is to compare A against each B and to return true only if the test is true against ALL B's, returning false otherwise. For the sake of this scenario, let the test be the greater-than comparison (although I'd be interested if your answer is different for slightly more complex comparisons).

A naïve parallel implementation could involve slicing up the set B and distributing the comparison workload across multiple cores. Each core's workload would then be entirely independent, except that a failed comparison would interrupt all the others, since the result would immediately be false. Interrupts play a role in this implementation, although I'd imagine an ever-decreasing one, probabilistically, as the array of integers gets larger.
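The naïve scheme described above can be sketched as follows. This is only an illustration of the structure, not a fast implementation: the function name `all_greater_parallel` and the `workers` parameter are hypothetical, and in CPython the global interpreter lock means these threads will not actually run the comparisons in parallel.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


def all_greater_parallel(a, bs, workers=4):
    """Return True only if a > b for every b in bs, checking slices concurrently."""
    failed = threading.Event()  # set by the first worker that sees a failure

    def check_slice(start, stop):
        for i in range(start, stop):
            if failed.is_set():  # another worker already found a failure
                return
            if not (a > bs[i]):
                failed.set()  # "interrupt" the other workers
                return

    # Ceiling division so every element lands in some slice (at least 1 wide).
    step = max(1, -(-len(bs) // workers))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [
            pool.submit(check_slice, start, min(start + step, len(bs)))
            for start in range(0, len(bs), step)
        ]
        for future in futures:
            future.result()  # re-raise any exception from a worker
    return not failed.is_set()
```

The shared `failed` flag plays the role of the interrupt: each worker polls it and stops early once any slice has produced a failing comparison.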

My question is three-fold:

Would such a scenario be suitable for parallel processing on a GPU? If so, under what circumstances? Or is this a misleading case where the direct CPU implementation is actually the fastest?

Can you suggest an improved parallel algorithm over the naïve one?

Can you suggest any reading to gain intuition on deciding such problems?

Answer

I think this is a situation where you'll derive minimal benefit from the use of a GPU. I also think this is a situation where it'll be difficult to get good returns on any form of parallelism.

Comments on the speed of memory versus CPUs

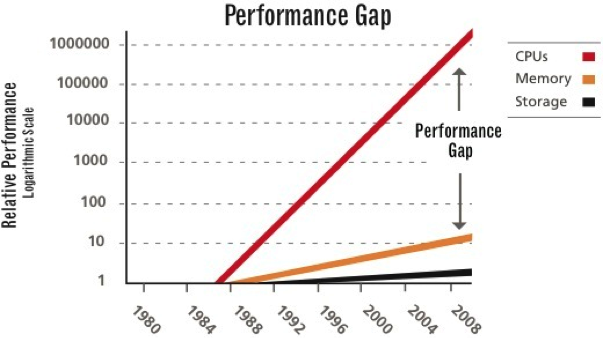

Why do I believe this? Behold: the performance gap (in terrifyingly unclear units).

The point here is that CPUs have gotten very fast. And, with SIMD becoming a thing, they are poised to become even faster.

In the meantime, memory is getting faster, but more slowly. Not shown on the chart are memory buses, which ferry data to/from the CPU. Those are also getting faster, but at a slow rate.

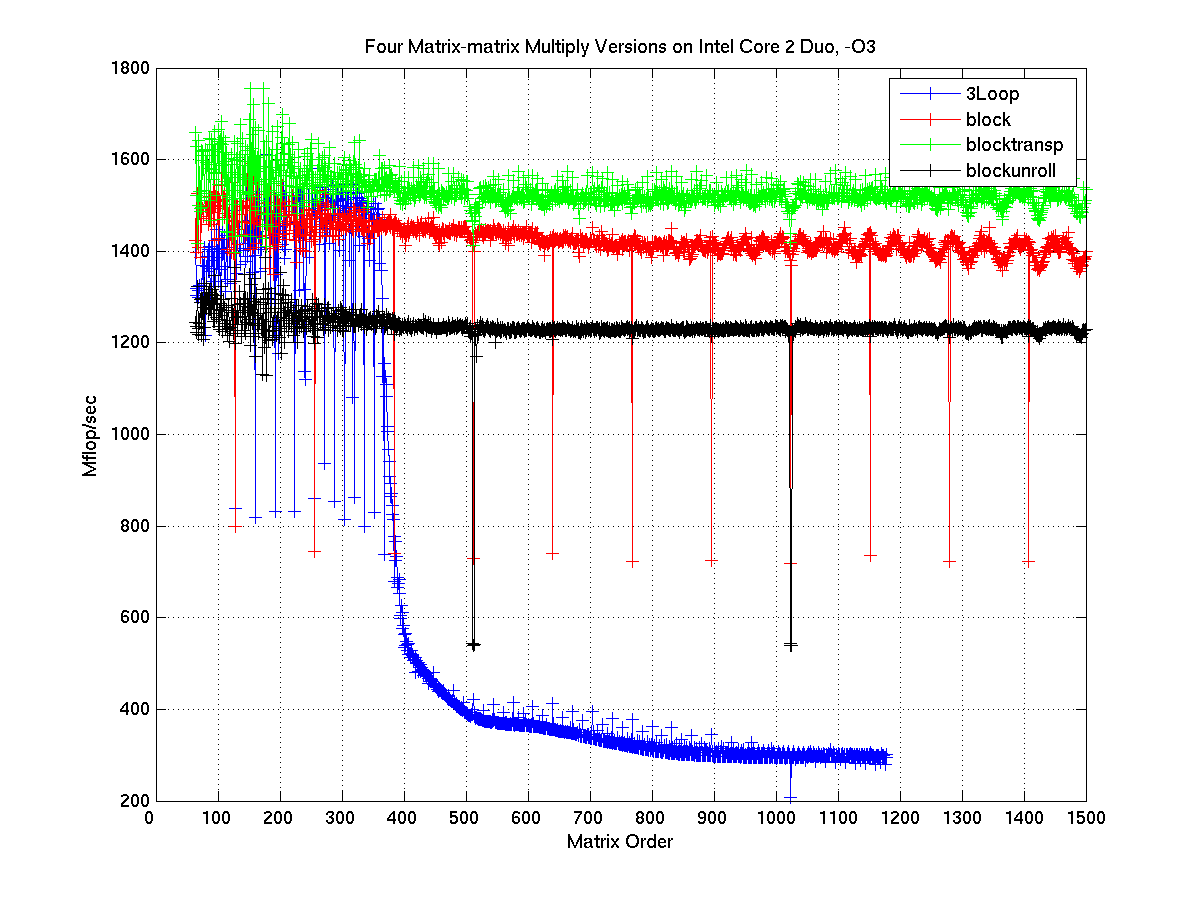

Since RAM and hard drives are slow, CPUs try to store data in "little RAMs" known as the L1, L2, and L3 caches. These caches are super-fast, but super-small. However, if you can design an algorithm to repeatedly use the same memory, these caches can speed things up by an order of magnitude. For instance, this site discusses optimizing matrix multiplication for cache reuse. The speed-ups are dramatic:

The speed of the naive implementation (3Loop) drops precipitously for everything above about a 350x350 matrix. Why is this? Because double-precision numbers (8 bytes each) are being used, this is the point at which the 1MB L2 cache on the test machine gets filled. All the speed gains you see in the other implementations come from strategically reusing memory so this cache doesn't empty as quickly.
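The 350x350 figure can be sanity-checked with a little arithmetic. A minimal sketch, assuming "1MB" means 2**20 bytes and counting the footprint of a single matrix only; the names `matrix_bytes` and `L2_CACHE_BYTES` are mine, not taken from the linked site.

```python
import math

L2_CACHE_BYTES = 2**20  # assumption: the "1MB" L2 cache is 1 MiB


def matrix_bytes(n, bytes_per_element=8):
    """Size in bytes of one n x n matrix of double-precision values."""
    return n * n * bytes_per_element


# Footprint of a single 350x350 matrix of doubles.
print(matrix_bytes(350))  # 980000

# Largest n for which one n x n matrix of doubles still fits in the cache.
largest_n = math.isqrt(L2_CACHE_BYTES // 8)
print(largest_n)  # 362
```

One 350x350 matrix of doubles is just under the assumed cache size, which is consistent with the drop-off appearing around that point.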

Caching in your algorithm

Your algorithm, by definition, does not reuse memory. In fact, it has the lowest possible rate of memory reuse. That means you get no benefit from the L1, L2, and L3 caches. It's as though you've plugged your CPU directly into the RAM.
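To make the lack of reuse concrete, here is a minimal sequential sketch of the task (the name `all_greater` is hypothetical). Every element is read at most once and then never touched again, so there is nothing for a cache to hold on to.

```python
def all_greater(a, bs):
    """Return True only if a > b for every b in bs.

    Each element of bs is read at most once, and the scan stops
    at the first failing comparison.
    """
    for b in bs:
        if not (a > b):
            return False
    return True


print(all_greater(10, [1, 2, 3]))   # True
print(all_greater(10, [1, 20, 3]))  # False
```

Compare this with naive n x n matrix multiplication, where each input element takes part in n multiplications and so rewards being kept in cache.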

How do you get data from RAM?

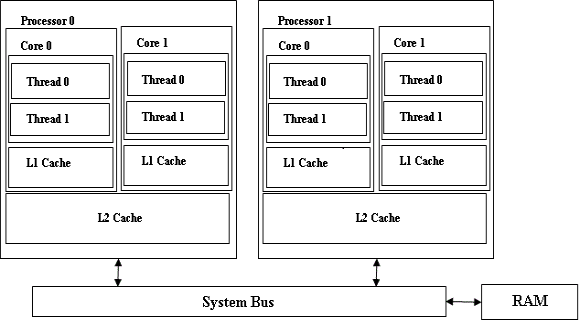

Here's a simplified diagram of a CPU:

Note that each core has its own, dedicated L1 cache. Core-pairs share L2 caches. RAM is shared between everyone and accessed via a bus.

This means that if two cores want to get something from RAM at the same time, only one of them is going to be successful. The other is going to be sitting there doing nothing. The more cores you have trying to get stuff from RAM, the worse this is.

For most code, the problem's not too bad since RAM is being accessed infrequently. However, for your code, the performance gap I talked about earlier, coupled with your algorithm's un-cacheable design, means that most of your code's time is spent getting stuff from RAM. That means that cores are almost always in conflict with each other for limited memory bandwidth.
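A back-of-the-envelope estimate shows why extra cores don't help once the bus is the bottleneck. This is a rough sketch: the function name is hypothetical, and the element count and the 20 GB/s bandwidth are illustrative assumptions, not measurements of any real machine.

```python
def min_scan_seconds(n_elements, bytes_per_element, bandwidth_bytes_per_s):
    """Lower bound on scan time when every byte must cross the memory bus once."""
    return n_elements * bytes_per_element / bandwidth_bytes_per_s


# Illustrative numbers only, not measurements.
N = 1_000_000_000   # one billion 32-bit integers
BANDWIDTH = 20e9    # assumed shared memory bandwidth, in bytes per second

for cores in (1, 2, 4, 8):
    # The bus is shared, so this bound does not depend on the core count.
    print(cores, min_scan_seconds(N, 4, BANDWIDTH))
```

Under these assumptions the bound is the same for every core count, because the same number of bytes has to travel over the same shared bus.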

What about using a GPU?

A GPU doesn't really fix things: most of your time will still be spent pulling stuff from RAM. Except rather than having one slow bus (from the CPU to RAM), you have two (the other being the bus from the CPU to the GPU).

Whether you get a speed-up depends on the relative speed of the CPU, the GPU-CPU bus, and the GPU. I suspect you won't get much of a speed-up, though. GPUs are good for SIMD-type operations, or maps. The operation you describe is a reduction (or fold): an operation that parallelizes far less readily, because the partial results have to be combined into a single answer. Since your mapped function (a single comparison) is extremely simple, the GPU will spend most of its time on the reduction operation.
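The split between the map and the reduction can be sketched like this. The code runs sequentially and only shows the structure; the name `all_greater_map_reduce` and the `chunk_size` parameter are hypothetical choices for illustration.

```python
from functools import reduce
from operator import and_


def all_greater_map_reduce(a, bs, chunk_size=4):
    """Return True only if a > b for every b in bs, as a map followed by a reduce."""
    chunks = [bs[i:i + chunk_size] for i in range(0, len(bs), chunk_size)]
    # Map step: each chunk is independent and could run on its own core.
    partial = [all(a > b for b in chunk) for chunk in chunks]
    # Reduce step: combine the partial answers into one boolean.
    return reduce(and_, partial, True)


print(all_greater_map_reduce(10, [1, 2, 3, 4, 5, 6, 7]))   # True
print(all_greater_map_reduce(10, [1, 2, 3, 4, 50, 6, 7]))  # False
```

The map step does one comparison per element, so almost all of the remaining work is moving data and combining results.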

tl;dr

This is a memory-bound operation: more cores and GPUs are not going to fix that.