efficient MPI collective non-blocking communication

An answer to this question on the Scientific Computing Stack Exchange.

Question

I am facing a problem with MPI (Fortran). Each node holds a very large matrix, and the matrices differ from node to node. At some point in my calculation, each node needs the matrices from all other nodes, so every node communicates with every other node and is a sender and a receiver at the same time. The matrices are already too big for me to simply collect the data from all other nodes. Currently I combine MPI_ISEND with MPI_RECV, but I suspect this is not the best solution, so I would like to ask the experts here.

To be more specific, the matrices on all nodes are the same size, but they are not replicas of each other; their elements differ from node to node. At some point, each node needs the matrix from every other node, so each node must send its own matrix while at the same time receiving data from all other nodes.

I would be really grateful for your valuable suggestions and possible solutions.

Answer

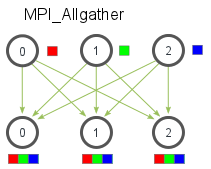

What you're describing (each node needs every other node's data) is exactly implemented by MPI_Allgather:
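To make the data movement concrete, here is a small plain-Python model of what an allgather leaves in each rank's receive buffer. It makes no MPI calls; `allgather_model` and the toy blocks are names invented for this sketch, and the flat lists stand in for the matrices. In Fortran the corresponding call is MPI_ALLGATHER(sendbuf, sendcount, sendtype, recvbuf, recvcount, recvtype, comm, ierror), where recvcount is the number of elements received from each rank.

```python
def allgather_model(send_blocks):
    """Return the receive buffer each rank would hold after an allgather.

    send_blocks[r] is the block contributed by rank r (all the same length).
    This is a model of the result only; it performs no communication.
    """
    block_len = len(send_blocks[0])
    if any(len(block) != block_len for block in send_blocks):
        raise ValueError("allgather needs equal-sized blocks on every rank")
    # Blocks are concatenated in rank order, and every rank gets the same result.
    gathered = [value for block in send_blocks for value in block]
    return [list(gathered) for _ in send_blocks]


# Three ranks, each owning a different 2-element "matrix".
send_blocks = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
recv_buffers = allgather_model(send_blocks)
print(recv_buffers[0])  # [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
```

Note that every rank's receive buffer is as large as all the matrices combined, so the memory concern raised in the question applies to the collective just as it does to hand-written sends and receives.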

You might expect MPI_Allgather to be more efficient than numerous MPI_ISEND/MPI_IRECV pairings since making MPI aware of your intended communication pattern could allow it to optimize information flow.
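As one illustration of what such an optimization can look like: a schedule that MPI libraries may use for large messages is a ring, in which each rank only ever talks to its neighbours and forwards one block per step. The sketch below models that schedule in plain Python without any MPI calls. `ring_allgather_model` is a hypothetical name, and whether your MPI library actually picks a ring depends on the implementation and the message size.

```python
def ring_allgather_model(send_blocks):
    """Simulate a ring allgather: p - 1 steps, one block forwarded per rank per step."""
    p = len(send_blocks)
    # have[r][src] is the block that originated on rank src, once rank r holds it.
    have = [{r: send_blocks[r]} for r in range(p)]
    for step in range(p - 1):
        # In this step, rank r forwards the block that originated on rank
        # (r - step) mod p to its right-hand neighbour (r + 1) mod p.
        in_flight = [((r + 1) % p, (r - step) % p, have[r][(r - step) % p])
                     for r in range(p)]
        for dest, src, block in in_flight:
            have[dest][src] = block
    # Every rank assembles its blocks in rank order.
    return [[have[r][src] for src in range(p)] for r in range(p)]


# Four ranks, each owning a different 2-element block.
send_blocks = [[10 * r, 10 * r + 1] for r in range(4)]
result = ring_allgather_model(send_blocks)
print(result[2])  # [[0, 1], [10, 11], [20, 21], [30, 31]]
```

In this model each rank sends and receives exactly one block per step, and every rank holds all blocks after p - 1 steps. A library that knows the whole pattern can choose a schedule like this, which is harder to arrange by hand with individual sends and receives.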

On the other hand, if per-node calculation time varies widely, MPI_ISEND/MPI_IRECV pairings let each node begin transferring data as soon as it finishes its own work, which might reduce the time nodes spend waiting on one another.

Some of these trade-offs are discussed in the answers to this question.

The best thing to do is to profile your communication using, say, Vampir, and address the problems you find. Otherwise, try both and compare their performance.