MPI data broadcast or not in C

An answer to this question on Stack Overflow.

Question

I have two slightly different MPI programs that give the same results.

The first is from an open-source package and has several data exchange steps in the middle:

    #include <stdio.h>
    #include <stdlib.h>
    #include <mpi.h>

    int main ( int argc, char **argv )
    {
        int i,j,nx=600,nz=300,NP, MYID;
        int idum[2];
        float v[420][720];

        /* every rank fills its own copy of v before MPI starts */
        for (i=0;i<420;i++){
            for (j=0;j<720;j++){
                if(i<161) { v[i][j] = 2800.0; }
                else { v[i][j] = 5200.0; }
            }
        }

        MPI_Init ( &argc, &argv );
        MPI_Comm_size ( MPI_COMM_WORLD, &NP );
        MPI_Comm_rank ( MPI_COMM_WORLD, &MYID );

        if(MYID==0){
            idum[0] = nx;
            idum[1] = nz;
        }

        /* rank 0 sends nx, nz and v to all other ranks */
        MPI_Barrier(MPI_COMM_WORLD);
        MPI_Bcast(&idum,2,MPI_INT,0,MPI_COMM_WORLD);
        MPI_Bcast(&v,420*720,MPI_FLOAT,0,MPI_COMM_WORLD);
        MPI_Barrier(MPI_COMM_WORLD);

        nx = idum[0];
        nz = idum[1];

        for (i=0;i<5;i++){
            printf("id=%d,v[%d][350]=%f,\n",MYID,i*100+19,v[i*100+19][350]);
        }
        printf("nx=%d,nz=%d\n",nx,nz);

        MPI_Finalize();
        exit(0);
    }

I run the code using mpirun with 4 cores. The results are:

    id=0,v[19][350]=2800.000000,
    id=0,v[119][350]=2800.000000,
    id=0,v[219][350]=5200.000000,
    id=0,v[319][350]=5200.000000,
    id=0,v[419][350]=5200.000000,
    nx=600,nz=300
    id=1,v[19][350]=2800.000000,
    id=1,v[119][350]=2800.000000,
    id=1,v[219][350]=5200.000000,
    id=1,v[319][350]=5200.000000,
    id=1,v[419][350]=5200.000000,
    nx=600,nz=300
    id=2,v[19][350]=2800.000000,
    id=2,v[119][350]=2800.000000,
    id=2,v[219][350]=5200.000000,
    id=2,v[319][350]=5200.000000,
    id=2,v[419][350]=5200.000000,
    nx=600,nz=300
    id=3,v[19][350]=2800.000000,
    id=3,v[119][350]=2800.000000,
    id=3,v[219][350]=5200.000000,
    id=3,v[319][350]=5200.000000,
    id=3,v[419][350]=5200.000000,
    nx=600,nz=300

But I think the data exchange part is somewhat redundant, so I simplified the code to:

    #include <stdio.h>
    #include <stdlib.h>
    #include <mpi.h>

    int main ( int argc, char **argv )
    {
        int i,j,nx=600, nz=300, NP=0, MYID;
        float v[420][720];

        /* every rank fills its own copy of v; nothing is exchanged */
        for (i=0;i<420;i++){
            for (j=0;j<720;j++){
                if(i<161) { v[i][j] = 2800.0; }
                else { v[i][j] = 5200.0; }
            }
        }

        MPI_Init ( &argc, &argv );
        MPI_Comm_size ( MPI_COMM_WORLD, &NP );
        MPI_Comm_rank ( MPI_COMM_WORLD, &MYID );

        for (i=0;i<5;i++){
            printf("id=%d,v[%d][350]=%f,\n",MYID,i*100+19,v[i*100+19][350]);
        }
        printf("nx=%d,nz=%d\n",nx,nz);

        MPI_Finalize();
        exit(0);
    }

I get the same results as with the first code.

Which of the two codes is correct? If both are correct, which one is better? Do we need the data exchange lines in the first code, and if so, why?

Answer

The question I think you're asking, based on your code, is which of these is better:

A. Have each node do redundant work.

B. Have one node do the work and distribute the results.

The answer is usually A.

To see why, consider these Latency Numbers Every Programmer Should Know:

[Figure: Latency Numbers Every Programmer Should Know]

Note that CPU operations take essentially no time (~1ns) and that doing a decently complex operation on 1KB of local memory takes 3 microseconds. On the other hand, sending 1KB of data over a network takes 10 microseconds. Therefore, you're moving (at least) three times faster by doing the operation locally.

But it's even better than that. In parallel computing, the "critical path" is the longest chain of operations that must run one after another. In a program that calculates and then broadcasts, the critical path is the sum of the two operations: 13 microseconds in this example, versus 3 microseconds if every node does the calculation locally.
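The arithmetic above can be written as a tiny cost model. This is only a sketch: the function names `redundant_path_us` and `broadcast_path_us` are made up for illustration, and the inputs are meant to be the rough figures from the latency table, not measurements of any real system.

```c
#include <assert.h>

/* Hypothetical cost model; all times are in microseconds. */

/* Strategy A: every rank computes its own copy, so nothing is sent.
   The critical path is just the computation. */
double redundant_path_us(double compute_us)
{
    assert(compute_us >= 0.0);
    return compute_us;
}

/* Strategy B: one rank computes and then sends the result.
   Receivers cannot proceed until both steps have finished,
   so the two times add up on the critical path. */
double broadcast_path_us(double compute_us, double send_us)
{
    assert(compute_us >= 0.0 && send_us >= 0.0);
    return compute_us + send_us;
}
```

With the numbers quoted above, `redundant_path_us(3.0)` is 3 and `broadcast_path_us(3.0, 10.0)` is 13, so under this model the broadcast version is more than four times slower.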

Communication also introduces unpredictability: message transmission time can depend on message length in nonlinear ways.

Communication bandwidth is also a function of the number of processes communicating.

So the question is: do you want to delegate the speed of your computation to a slow network with unknown timing properties? Or do you want to do redundant computation locally, where you know it will be fast?

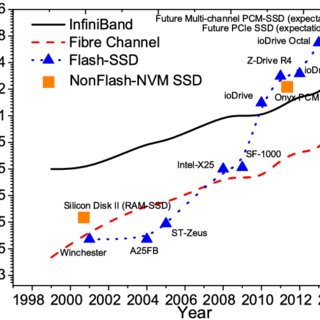
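Rather than rely on table values, you can time the two strategies on your own machine. The following is a minimal sketch, assuming the same 420x720 array and initialization as in the question; the helper `fill` and the variable names are mine, and the array is made `static` only to keep it off the stack.

```c
#include <mpi.h>
#include <stdio.h>

#define ROWS 420
#define COLS 720

static float v[ROWS][COLS];

/* Same initialization as in the question. */
static void fill(void)
{
    for (int i = 0; i < ROWS; i++)
        for (int j = 0; j < COLS; j++)
            v[i][j] = (i < 161) ? 2800.0f : 5200.0f;
}

int main(int argc, char **argv)
{
    int rank;
    MPI_Init(&argc, &argv);
    MPI_Comm_rank(MPI_COMM_WORLD, &rank);

    /* Strategy A: every rank fills its own copy. */
    MPI_Barrier(MPI_COMM_WORLD);
    double t0 = MPI_Wtime();
    fill();
    double t_local = MPI_Wtime() - t0;

    /* Strategy B: rank 0 fills, then broadcasts to all ranks. */
    MPI_Barrier(MPI_COMM_WORLD);
    t0 = MPI_Wtime();
    if (rank == 0)
        fill();
    MPI_Bcast(v, ROWS * COLS, MPI_FLOAT, 0, MPI_COMM_WORLD);
    double t_bcast = MPI_Wtime() - t0;

    /* The slowest rank determines the critical path of each strategy. */
    double max_local, max_bcast;
    MPI_Reduce(&t_local, &max_local, 1, MPI_DOUBLE, MPI_MAX, 0, MPI_COMM_WORLD);
    MPI_Reduce(&t_bcast, &max_bcast, 1, MPI_DOUBLE, MPI_MAX, 0, MPI_COMM_WORLD);
    if (rank == 0)
        printf("local fill: %g s, fill + broadcast: %g s\n", max_local, max_bcast);

    MPI_Finalize();
    return 0;
}
```

Build it with your MPI compiler wrapper (typically `mpicc`) and launch it with `mpirun` as in the question. When all ranks run on one machine, the broadcast usually goes through shared memory rather than a network, so the difference may be much smaller than across nodes; a single run is also noisy, so repeat the measurement before drawing conclusions.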

And if you compare trends in network versus memory bandwidth, the push to avoid communication is only increasing.

This is why communication-avoiding algorithms are a bit of a hot topic. "Communication" here means not just traffic between network nodes, but also between RAM and CPU cache, and even between different levels of the CPU cache. The speeds of various parts of computers are diverging from each other exponentially, so it is more and more important to avoid the slow parts of your system as much as possible.