Is there an optimization scheme/algorithm that converges for this non-convex scenario with some special properties?

An answer to this question on the Scientific Computing Stack Exchange.

Question

I have a smooth function $f(x) = \frac{g(x)}{h(x)}$ that is the ratio of two smooth convex functions $g(x)$ and $h(x)$. It is known that $f(x)$ has a global minimum, attained at the unique point $x_0$. It is also known that $f(x)$ has countably many local minima, and that no two local minima have the same value of $f$.

Question: Is there an optimization algorithm for this scenario that converges to the global minimum? I am hoping there is some variant of gradient descent or the like. I'd appreciate any references or solutions.

Answer

Despite your claim that these functions have "special properties", the properties you've supplied still leave $g$ and $h$ extremely general, so any answers you get will be correspondingly general. If, for instance, you know that one of the functions is always positive, is quadratic, or is a quadratic form built from a positive semi-definite matrix, then you should ask a new question that states this critical information from the start.

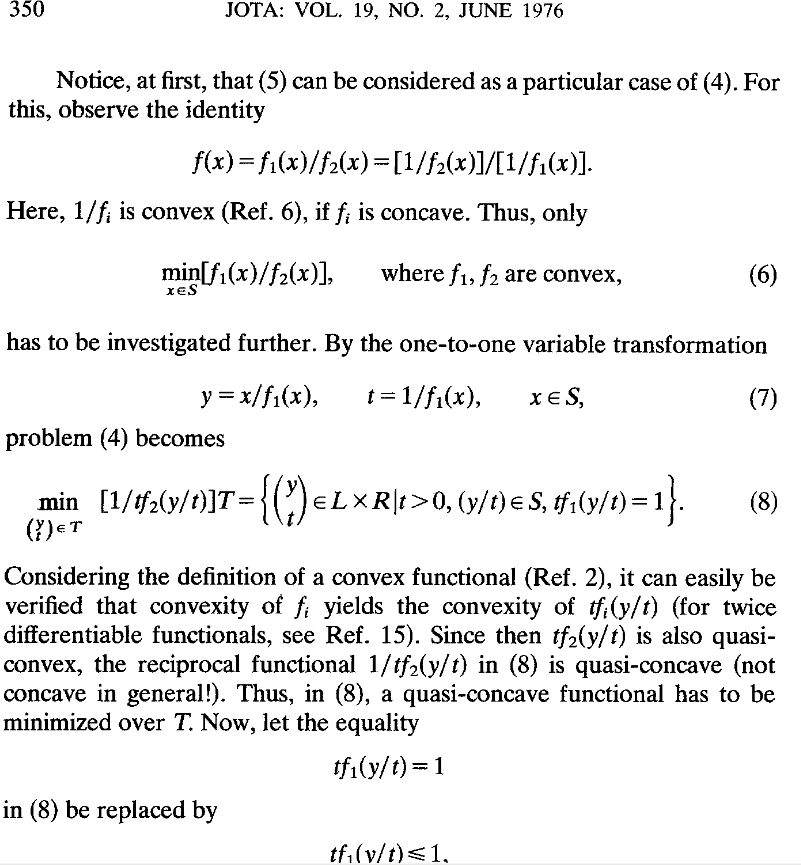

What you're describing is convex-convex fractional programming. Schaible's 1976 paper "Minimization of ratios" explains how such a problem can be transformed into a quasi-convex problem.
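The identity that makes bisection on a value $t$ possible is sketched below, assuming $h(x) > 0$ on the domain. This is the standard sublevel-set view of a ratio, not necessarily the exact form of Schaible's transformation:

$$
\frac{g(x)}{h(x)} \le t \iff g(x) - t\,h(x) \le 0 \qquad \text{whenever } h(x) > 0,
$$

$$
f(x_0) = \min_x \frac{g(x)}{h(x)} = \min\bigl\{\, t : g(x) - t\,h(x) \le 0 \text{ for some } x \,\bigr\}.
$$

So testing a candidate $t$ only requires deciding whether $\min_x \bigl[g(x) - t\,h(x)\bigr] \le 0$. For $t \ge 0$ this inner problem is convex when $h$ is concave (for example affine); for other choices of $h$ its difficulty depends on the structure of $g$ and $h$.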

So you can make this into a quasi-convex problem. That means you can use bisection on $t$ to solve it to a tolerance of $\epsilon$ in $\lceil \log_2((u-l)/\epsilon) \rceil$ steps, where $l$ and $u$ are lower and upper bounds on the $t$ variable shown above; each step halves the interval known to contain the optimal value.
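A minimal bisection sketch in Python, assuming a feasibility oracle `feasible(t)` that decides whether some $x$ satisfies $f(x) \le t$. Everything concrete here is a hypothetical toy: `g`, `h`, the domain `[A, B]`, and the helpers `bisect_quasiconvex` and `min_convex_1d` are illustrative names, not from the answer or any library. $h$ is chosen affine and positive on the domain, so $g - t\,h$ is convex for every $t$ and a ternary search answers each feasibility query. For this toy the true optimum is $t^\star = 2\sqrt{5} - 4 \approx 0.472$, attained at $x_0 = \sqrt{5} - 2$.

```python
import math
from typing import Callable, Tuple


def bisect_quasiconvex(feasible: Callable[[float], bool],
                       lower: float, upper: float, eps: float) -> float:
    """Bisection on the optimal value t* of a quasiconvex problem.

    Requires lower <= t* <= upper. Each step halves [lower, upper], so
    ceil(log2((upper - lower) / eps)) steps leave an interval of width <= eps.
    """
    steps = math.ceil(math.log2((upper - lower) / eps))
    for _ in range(steps):
        t = 0.5 * (lower + upper)
        if feasible(t):   # some x has f(x) <= t, so t* <= t
            upper = t
        else:             # no x has f(x) <= t, so t* > t
            lower = t
    return upper          # within eps of t* (above it)


def min_convex_1d(phi: Callable[[float], float], a: float, b: float,
                  iters: int = 200) -> Tuple[float, float]:
    """Ternary search for the minimum of a convex function on [a, b]."""
    for _ in range(iters):
        m1 = a + (b - a) / 3.0
        m2 = b - (b - a) / 3.0
        if phi(m1) <= phi(m2):
            b = m2        # for convex phi, a minimizer lies in [a, m2]
        else:
            a = m1        # otherwise a minimizer lies in [m1, b]
    x = 0.5 * (a + b)
    return x, phi(x)


# Hypothetical toy instance: g convex and positive, h affine (hence convex).
def g(x: float) -> float:
    return x * x + 1.0


def h(x: float) -> float:
    return x + 2.0        # positive on the domain below


A, B = -1.0, 3.0          # hypothetical search domain where h(x) > 0


def feasible(t: float) -> bool:
    # f(x) <= t  <=>  g(x) - t*h(x) <= 0, valid because h(x) > 0 on [A, B].
    # g - t*h is convex here only because h is affine.
    _, value = min_convex_1d(lambda x: g(x) - t * h(x), A, B)
    return value <= 0.0


# l = 0 is a valid lower bound since f > 0 on [A, B]; u = f(0) is an upper bound.
t_star = bisect_quasiconvex(feasible, lower=0.0, upper=g(0.0) / h(0.0), eps=1e-6)
x_star, _ = min_convex_1d(lambda x: g(x) - t_star * h(x), A, B)
print(t_star, x_star)
```

With $l = 0$ and $u = f(0) = 0.5$, the loop runs $\lceil \log_2(0.5 / 10^{-6}) \rceil = 19$ times. Replacing the toy oracle with a real one (for example, a call to a convex solver) only changes `feasible`; the bisection loop stays the same.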

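Depending on the functions involved, you may be able to use cvxpy to solve the problem; its disciplined quasiconvex programming (DQCP) mode solves quasiconvex problems by bisection via `problem.solve(qcp=True)`. Some examples showing bisection of quasiconvex functions are here and here.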