Deep learning using distributed linear algebra

An answer to this question on the Scientific Computing Stack Exchange.

Question

Is there any deep learning library based on Trilinos or PETSc linear algebra?

Answer

I take "distributed linear algebra" to mean linear algebra distributed across nodes.

This probably wouldn't be a good design choice.

Why would you distribute the problem?

1. Maybe it will make training faster!

2. Maybe I can learn on bigger datasets!

Let's talk about (1).

The K80's internal bandwidth is about 480GB/s (gigabytes per second). The Haswell architecture is about 102GB/s. The first-generation TPUs had a bandwidth of about 34GB/s.

In comparison, InfiniBand, the interconnect used in many supercomputers, has a bandwidth of just 25GB/s.
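
To put those numbers side by side, here is a rough sketch of the ideal time needed to move a fixed payload at each of the bandwidths quoted above. The 1 GB payload and the `transfer_seconds` helper are my own illustrative assumptions, and the estimate ignores latency and protocol overhead, so real interconnect transfers would be slower still.

```python
# Bandwidth figures quoted in the text, in GB/s.
BANDWIDTH_GB_S = {
    "K80 memory": 480.0,
    "Haswell memory": 102.0,
    "TPU v1 memory": 34.0,
    "InfiniBand": 25.0,
}

# Hypothetical payload size, chosen only for illustration.
PAYLOAD_GB = 1.0


def transfer_seconds(gigabytes, bandwidth_gb_s):
    """Ideal transfer time, ignoring latency and protocol overhead."""
    return gigabytes / bandwidth_gb_s


for name, bandwidth in BANDWIDTH_GB_S.items():
    milliseconds = 1000 * transfer_seconds(PAYLOAD_GB, bandwidth)
    print(f"{name:15s} {milliseconds:7.2f} ms")
```

With these figures, moving the same data over InfiniBand takes roughly 19 times longer than reading it from the K80's own memory (480 / 25 = 19.2).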

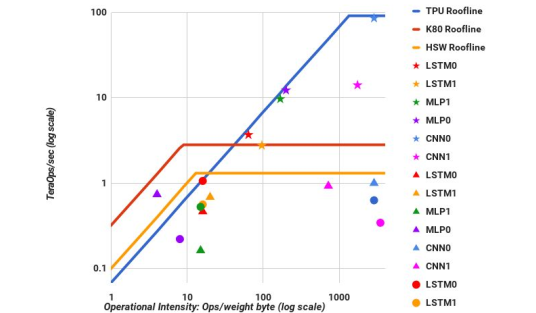

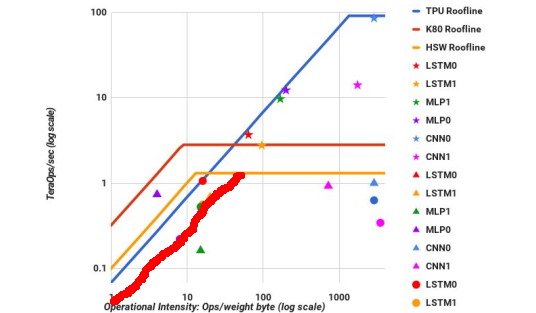

If we look at a roofline chart of deep learning models, this provides some useful information:

The vertical axis is performance, while the horizontal axis is arithmetic intensity, or memory reuse (operations performed per byte loaded from memory).

The diagonal lines are regions where the kernels are limited by the speed of memory. The horizontal lines indicate limitations from the speed of floating-point operations. Feeding the kernels over InfiniBand, at the bandwidth quoted above, means drawing a new, lower diagonal line:

There are roughly two groups of kernels: those that are memory-limited (on the left-hand side) and those that are computation-limited (on the right-hand side). For the kernels on the RHS, the only way to make them faster is to have faster floating-point operations. That's why we built the TPU: it was physically impossible to accelerate the kernels in any other way.

The memory-bound kernels can be accelerated in two ways:

- By increasing arithmetic intensity (memory reuse), thereby sliding them up and to the right so that they're blocked by one of the horizontal rooflines.

- By increasing the speed of the memory they're bound by. This is often achieved by improving cache utilization or the locality of an operation.

As you can see, InfiniBand does neither of these things: it adds a slower memory roofline rather than raising an existing one. Therefore, this kind of distributed operation is unlikely to be useful for accelerating deep learning.
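
The roofline argument can be sketched numerically. In the standard roofline model, attainable performance is the smaller of peak compute and bandwidth times arithmetic intensity. The bandwidths below are the ones quoted earlier; the peak of 4000 GFLOP/s is a hypothetical value I picked for illustration, not a measured figure for any device.

```python
# Hypothetical peak compute rate, chosen only for illustration.
PEAK_GFLOPS = 4000.0

# Bandwidths quoted in the text, in GB/s.
MEMORY_BW_GB_S = 480.0      # K80 memory
INFINIBAND_BW_GB_S = 25.0   # interconnect


def attainable_gflops(intensity, peak_gflops, bandwidth_gb_s):
    """Roofline model: min(compute roof, bandwidth * FLOPs-per-byte)."""
    return min(peak_gflops, bandwidth_gb_s * intensity)


def ridge_point(peak_gflops, bandwidth_gb_s):
    """Arithmetic intensity at which a kernel stops being bandwidth-bound."""
    return peak_gflops / bandwidth_gb_s


for intensity in (1, 10, 100, 1000):
    local = attainable_gflops(intensity, PEAK_GFLOPS, MEMORY_BW_GB_S)
    remote = attainable_gflops(intensity, PEAK_GFLOPS, INFINIBAND_BW_GB_S)
    print(f"{intensity:5d} FLOP/byte: local {local:7.1f}, over interconnect {remote:7.1f} GFLOP/s")

print("ridge (local memory):", ridge_point(PEAK_GFLOPS, MEMORY_BW_GB_S))
print("ridge (interconnect):", ridge_point(PEAK_GFLOPS, INFINIBAND_BW_GB_S))
```

Under these assumptions a kernel needs about 8 operations per byte to reach the compute roof from local memory but 160 when its data arrives over the interconnect, so many more kernels end up bandwidth-bound.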

What about the second possibility: training on bigger datasets or with larger models? Both require more training time, and training is already expensive. Couple this with each step running slower across the interconnect and it's a no-go.

What about limiting yourself to a single node? Well, in that case there are simpler libraries than PETSc (e.g. MKL) that provide the same operations, so there's no need to pull in the PETSc dependency.

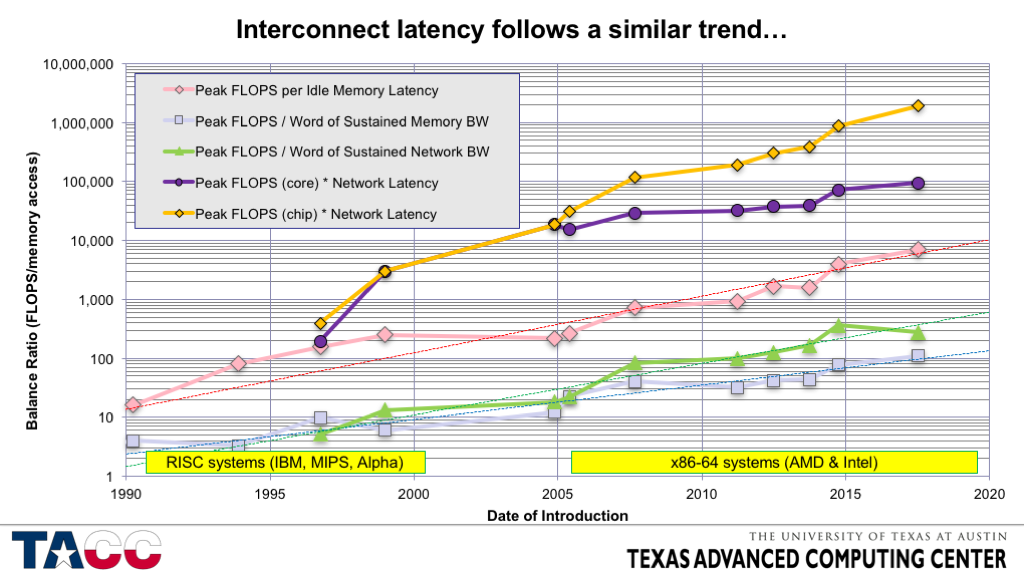

To address one of the comments, since 1990 FLOPS per socket have increased at about 50-60% per year while interconnect bandwidth has increased at only 20% per year and interconnect latency has only decreased at about 20% per year. See this chart, for instance:

This means that if you want peak performance you need to avoid interconnects, and this will only become more true over time. Research in communication-avoiding algorithms seeks ways of achieving good performance in the face of this reality. In deep learning, distributed training methods have a similar motivation.
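
To see how quickly that gap compounds, here is a small sketch using the growth rates quoted above. I take the lower 50% figure for FLOPS and 20% for interconnect bandwidth; `relative_gap` is just an illustrative helper, and the rates are the approximate ones from the text, not fitted data.

```python
def relative_gap(years, compute_growth=0.5, interconnect_growth=0.2):
    """Factor by which compute outpaces interconnect bandwidth after `years`,
    assuming both grow at constant annual rates."""
    return ((1 + compute_growth) / (1 + interconnect_growth)) ** years


for years in (1, 5, 10, 20):
    print(f"after {years:2d} years: compute/bandwidth ratio grows {relative_gap(years):8.1f}x")
```

At these rates the ratio of compute to interconnect bandwidth grows by 1.25x per year, which compounds to roughly 9x per decade.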

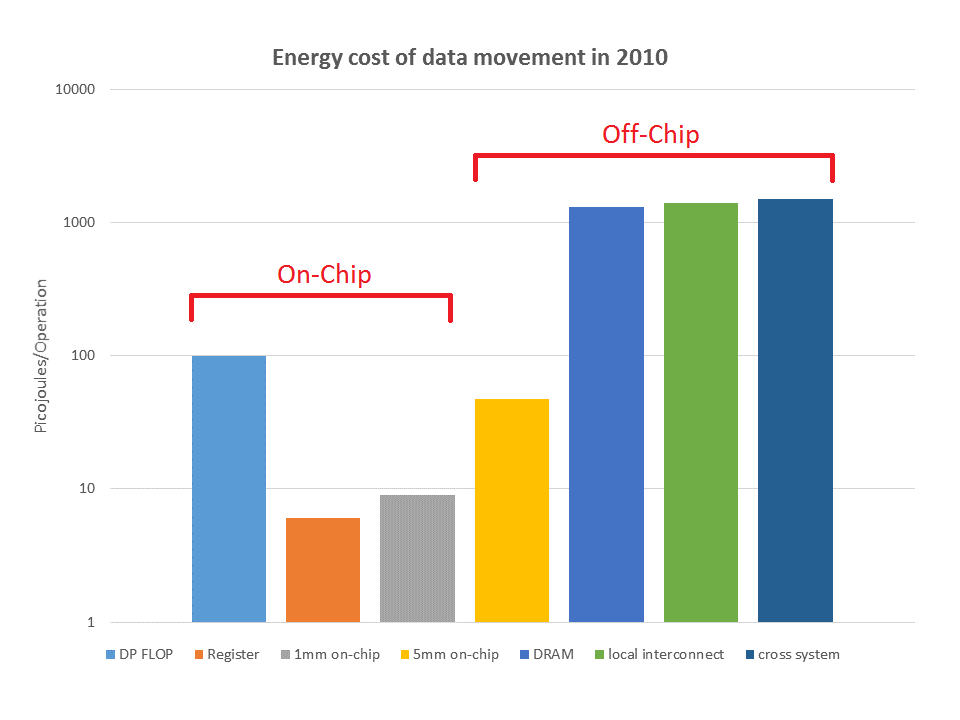

Aside from its algorithmic cost, communication is also expensive in terms of energy:

Collectively, these trends are also changing the way we design computers. Summit, for instance, packs a lot of compute power into each node to reduce these costs.